New to KubeDB? Please start here.

Distributed MariaDB Compute Resource Autoscaling

This guide gives an overview of how the KubeDB Autoscaler operator autoscales the compute resources, i.e. CPU and memory, of a distributed MariaDB cluster using the `mariadbautoscaler` CRD.

Before You Begin

- You should be familiar with the following `KubeDB` concepts:

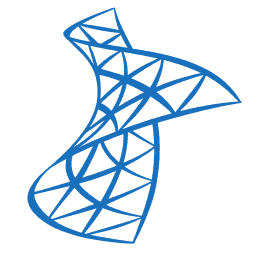

How Compute Autoscaling Works

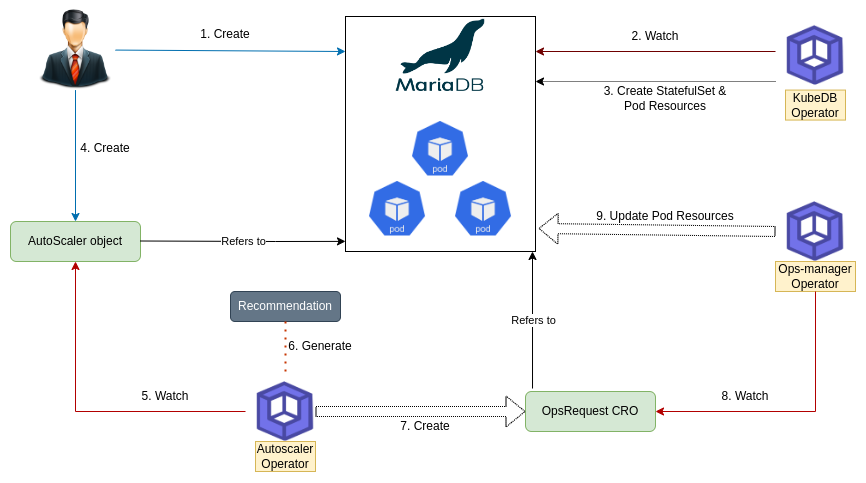

The following diagram shows how KubeDB Autoscaler operator autoscales the resources of MariaDB database components. Open the image in a new tab to see the enlarged version.

The Auto Scaling process consists of the following steps:

1. At first, the user creates a `PlacementPolicy` Custom Resource (CR) with `monitoring.prometheus.url` configured for each spoke cluster. This allows the autoscaler to scrape metrics from the Prometheus instance running in each spoke cluster.

2. The user creates a `MariaDB` Custom Resource Object (CRO) with `spec.distributed: true` and a reference to the `PlacementPolicy`.

3. `KubeDB` Community operator watches the `MariaDB` CRO.

4. When the operator finds a `MariaDB` CRO, it creates the required number of `PetSets` and distributes them across spoke clusters as defined by the `PlacementPolicy`.

5. Then, in order to set up autoscaling of the CPU and memory resources of the `MariaDB` database, the user creates a `MariaDBAutoscaler` CRO with the desired configuration.

6. `KubeDB` Autoscaler operator watches the `MariaDBAutoscaler` CRO.

7. `KubeDB` Autoscaler operator uses a modified version of the official Kubernetes VPA-Recommender for the different components of the database, as specified in the `mariadbautoscaler` CRO. It generates recommendations based on resource usage by querying the Prometheus endpoints configured in the `PlacementPolicy`, and stores them in the `status` section of the autoscaler CRO.

8. If the generated recommendation doesn't match the current resources of the database, then the `KubeDB` Autoscaler operator creates a `MariaDBOpsRequest` CRO to scale the database to match the recommendation provided by the VPA object.

9. `KubeDB Ops-Manager operator` watches the `MariaDBOpsRequest` CRO.

10. Lastly, the `KubeDB Ops-Manager operator` scales the database component vertically as specified in the `MariaDBOpsRequest` CRO.
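
The comparison between a generated recommendation and the database's current resources can be pictured with a small sketch. This is illustrative only and is not KubeDB's implementation: the helper names (`cpu_to_millicores`, `memory_to_bytes`, `needs_ops_request`) are hypothetical, the dictionaries merely stand in for the resource values an operator would read, and only a few Kubernetes quantity suffixes are handled.

```python
# Hypothetical helpers for illustration; not part of KubeDB or Kubernetes.

# Binary suffixes commonly used for Kubernetes memory quantities.
_MEMORY_UNITS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def cpu_to_millicores(value: str) -> int:
    """Convert a CPU quantity such as '500m' or '0.5' to millicores."""
    if value.endswith("m"):
        return int(value[:-1])
    return round(float(value) * 1000)


def memory_to_bytes(value: str) -> int:
    """Convert a memory quantity such as '512Mi' or '1Gi' to bytes."""
    for suffix, factor in _MEMORY_UNITS.items():
        if value.endswith(suffix):
            return int(value[: -len(suffix)]) * factor
    return int(value)  # assume a plain byte count


def normalize(resources: dict) -> tuple:
    """Return (millicores, bytes) so equivalent quantities compare equal."""
    return (
        cpu_to_millicores(resources["cpu"]),
        memory_to_bytes(resources["memory"]),
    )


def needs_ops_request(current: dict, recommended: dict) -> bool:
    """True when the recommendation differs from the current resources."""
    return normalize(current) != normalize(recommended)


current = {"cpu": "500m", "memory": "1Gi"}
print(needs_ops_request(current, {"cpu": "0.5", "memory": "1024Mi"}))  # False
print(needs_ops_request(current, {"cpu": "750m", "memory": "1Gi"}))    # True
```

Normalizing both sides before comparing avoids treating `0.5` and `500m` as different values. The sketch uses a plain equality check and does not model any threshold or tolerance the real operator may apply.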

Key Difference from Non-Distributed Autoscaling: For distributed MariaDB, the `PlacementPolicy` must include a `monitoring.prometheus.url` for each spoke cluster's `distributionRules` entry. The autoscaler uses these Prometheus endpoints to collect resource metrics from pods running across multiple Kubernetes clusters.
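
As a rough illustration of this requirement, the sketch below checks that every distribution rule carries a Prometheus URL before autoscaling is set up. The dictionary layout is an assumption made for the example: only the `monitoring.prometheus.url` path and the `distributionRules` name come from this guide, while the `clusterName` key, the cluster names, the URL, and the function name are hypothetical. Consult the `PlacementPolicy` reference for the actual schema.

```python
# Hypothetical check for illustration; the rule layout is assumed, not the
# authoritative PlacementPolicy schema.

def clusters_missing_prometheus(distribution_rules: list) -> list:
    """Return the names of rules that have no monitoring.prometheus.url."""
    missing = []
    for rule in distribution_rules:
        monitoring = rule.get("monitoring") or {}
        prometheus = monitoring.get("prometheus") or {}
        if not prometheus.get("url"):
            # "clusterName" is an assumed key used only to label the rule.
            missing.append(rule.get("clusterName", "<unnamed>"))
    return missing


rules = [
    {
        "clusterName": "spoke-1",
        "monitoring": {
            "prometheus": {"url": "http://prometheus.spoke-1.example:9090"}
        },
    },
    {"clusterName": "spoke-2"},  # no Prometheus endpoint configured
]

print(clusters_missing_prometheus(rules))  # ['spoke-2']
```

An empty result would mean every spoke cluster in the example has an endpoint the autoscaler could query for metrics.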

In the next docs, we are going to show a step-by-step guide on autoscaling a distributed MariaDB database using the `MariaDBAutoscaler` CRD.